Build and deploy with a single Dockerfile

One of the subtler but most fantastic parts of the Dockerfile spec is the ability to copy files from one image to another. Today we'll show how to build and deploy from a single file. Consider a very simple Dockerfile:

FROM node:8 as builder

WORKDIR /usr/app

COPY . .

RUN npm install

RUN npm run build

FROM nginx:latest as http

COPY --from=builder /usr/app/dist /usr/share/nginx/html

If you don't know much about Dockerfiles then please go read one of the better intros first; otherwise you'll hopefully notice the oddity of the double FROM command. What's happening here is the following:

- A node machine is spun up.
- Node builds the site.
- Docker then copies the dist directory from the Node image into the nginx server to be hosted.
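The same three steps can be sketched with unnamed stages, which Docker numbers from 0 in the order their FROM lines appear. This is only an illustrative variant: the /usr/app working directory is an assumption inferred from the path used in the COPY --from line, and collapsing install and build into one RUN is a shortcut for brevity.

```dockerfile
# Stage 0: the Node machine that builds the site.
FROM node:8
# Assumption: the project is built under /usr/app.
WORKDIR /usr/app
COPY . .
RUN npm install && npm run build

# Stage 1: the nginx server that hosts the result.
FROM nginx:latest
# --from=0 refers to the first stage above; a named stage works the same way.
COPY --from=0 /usr/app/dist /usr/share/nginx/html
```

Naming the stages is usually easier to read, since an index silently changes meaning if a stage is added above it.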

The Linux lot among you will note this technique is just an extension of the make-it-yourself paradigm, but in a way that's separate from the usual build-dependency chaos.

But could we go faster?

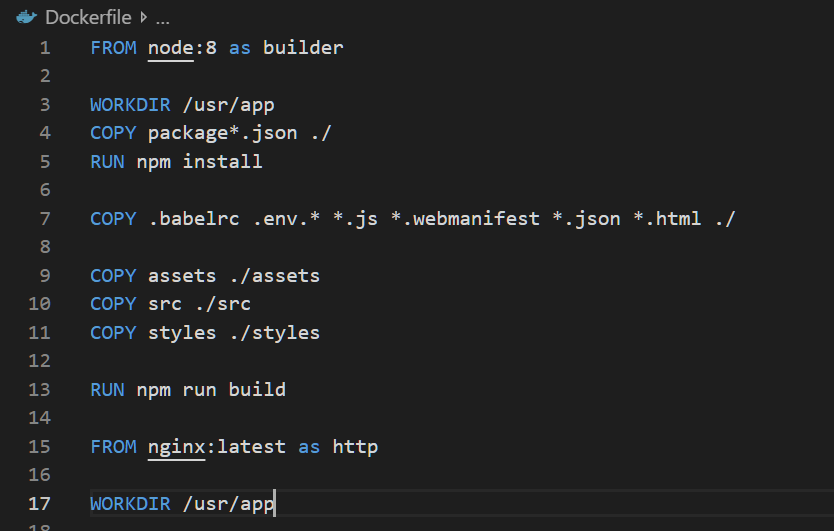

That's it really: we have an image that builds and deploys a Node project. But I use this model in my CI, and waiting for a full npm install on a major project is a pain, so I've split the build step into a two-step process:

FROM node:8 as builder

WORKDIR /usr/app

COPY package*.json ./

RUN npm install

COPY .babelrc .env.* *.js *.webmanifest *.json *.html ./

COPY src ./src

RUN npm run build

FROM nginx:latest as http

COPY --from=builder /usr/app/dist /usr/share/nginx/html

See, Docker has a very powerful cache layer, allowing any step to be skipped if it and every prior step are identical in their input. The theory is that if the package.json file hasn't changed, then both the COPY and RUN npm install steps are skipped, leaving Docker to worry only about the more likely source change.
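For contrast, here is a sketch of a cache-unfriendly ordering of the builder stage. It is not one of my real files, and the /usr/app working directory is again an assumption taken from the COPY --from path.

```dockerfile
# Shown only for contrast: copying the whole project before installing.
FROM node:8 as builder
WORKDIR /usr/app
# Any edited file changes this layer's input, so the cache misses here...
COPY . .
# ...and every later step re-runs, including the full install,
# even when package.json has not changed.
RUN npm install
RUN npm run build
```

Copying only package*.json ahead of the install keeps that expensive layer reusable for as long as the dependency list stays the same.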

But what about nginx's configuration?

This is the even cooler and slightly contentious bit. A bigger project would argue that the following is a bit dirty, but if you appreciate that my side projects are a solo show then you might forgive me.

All my services describe how they're hosted, along with their configuration, within their repo. By that I mean each repo contains both the source and the configuration code describing the hosting process, so the project is responsible all the way up to responding correctly on port 80.

That means the nginx.conf sits next to my project's source code (a sysadmin retches in the corner). This inversion of duty is frankly amazing to run solo. I can run every project both locally and on a cloud server with literally the same build pipeline. On the cloud it's behind an HTTPS load balancer that provides TLS, and locally I simply connect to an exposed port 80.
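A minimal sketch of how that could look in the same Dockerfile. The assumptions here are mine: that nginx.conf sits in the repo root, that it is a complete main configuration (which the official nginx image reads from /etc/nginx/nginx.conf), and that the build runs under /usr/app.

```dockerfile
FROM node:8 as builder
# Assumption: the project is built under /usr/app.
WORKDIR /usr/app
COPY package*.json ./
RUN npm install
COPY . .
RUN npm run build

FROM nginx:latest as http
# Assumption: nginx.conf lives in the repo root, next to the source.
COPY nginx.conf /etc/nginx/nginx.conf
COPY --from=builder /usr/app/dist /usr/share/nginx/html
# Documents the port the container answers on; it is not published by itself.
EXPOSE 80
```

Locally, something like docker build -t my-site . followed by docker run -p 8080:80 my-site would serve the build on port 8080 (my-site is a placeholder tag). In the cloud, the load balancer forwards to the same port 80.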